Assurance Lab

Test AI outputs before they go live.

Run AI replies, actions and workflow updates through realistic scenarios before they are used in sensitive work. Create pilot-ready evidence before live use.

test draft replies

test proposed actions

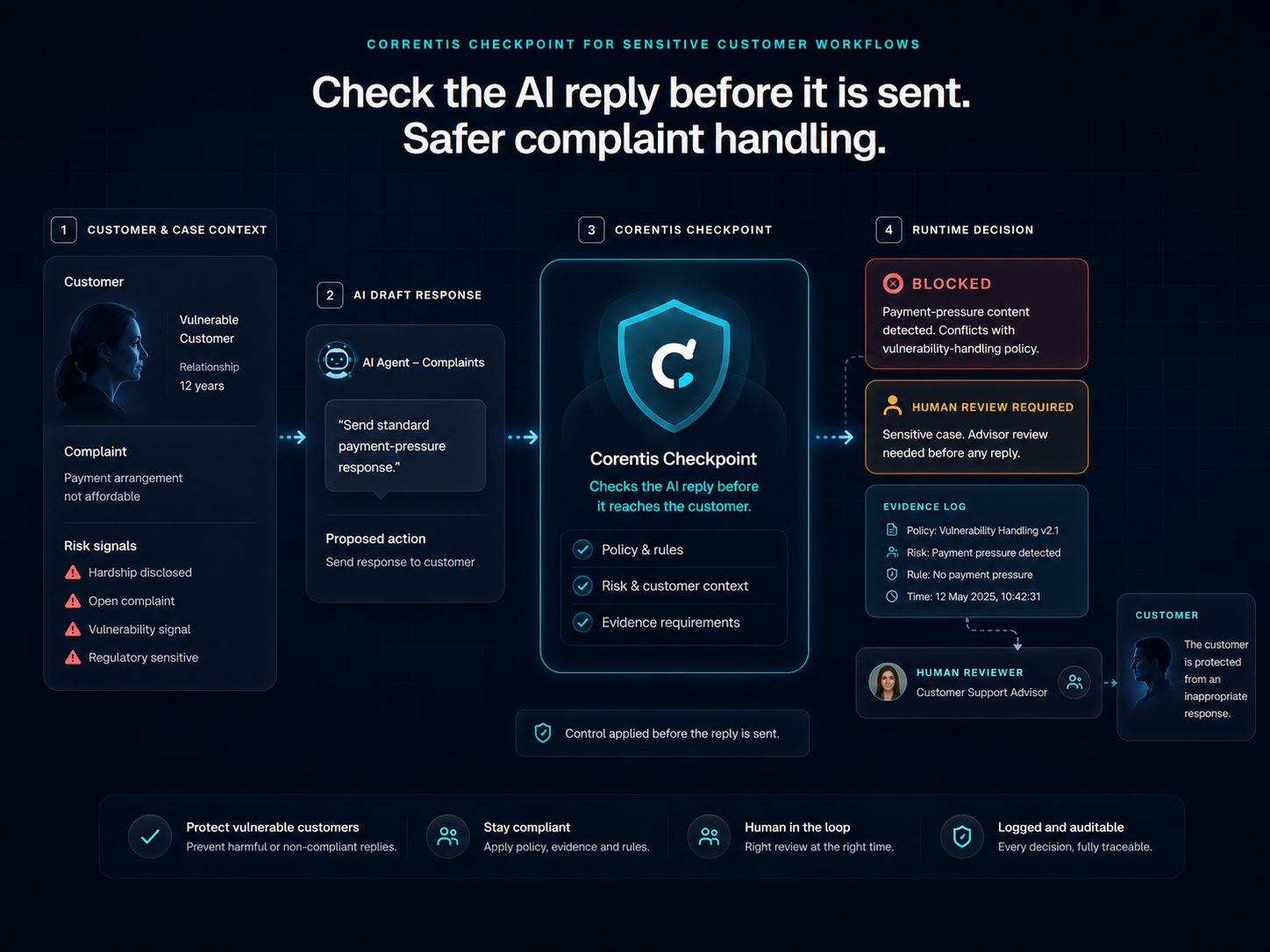

spot risky outputs

find missing evidence

identify where human review is needed

create a report before deployment

A practical way to test before live use

Choose one workflow

Run realistic scenarios

Capture AI replies and actions

Find risk and evidence gaps

Compare with the Corentis Shield path

Create a pilot-ready report

Signals teams can discuss

Measured

Risks surfaced

Review points found

Evidence gaps identified

Outputs blocked before use

Pilot-ready report

From pilot tests to benchmark asset

Each tested workflow can add to a wider regulated AI output benchmark: scenarios, risk labels, expected decisions, evidence requirements and model-output results.
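As a rough illustration, one benchmark entry could be represented as a small record holding the five items listed above. This is only a sketch under stated assumptions: the class name `BenchmarkRecord`, its field names, the helper `summarize`, and the decision values (`"allow"`, `"human_review"`, `"block"`) are hypothetical and do not describe how Assurance Lab stores its data.

```python
from dataclasses import dataclass, field


@dataclass
class BenchmarkRecord:
    """One tested scenario. All names here are hypothetical, for illustration only."""
    scenario: str                     # realistic situation the AI was run through
    risk_labels: list[str]            # risks attached to this scenario
    expected_decision: str            # assumed values: "allow", "human_review", "block"
    evidence_requirements: list[str]  # evidence that should accompany the output
    model_output: str                 # the AI reply or proposed action that was captured
    observed_decision: str            # what actually happened to the output in the test
    evidence_provided: list[str] = field(default_factory=list)

    def missing_evidence(self) -> list[str]:
        """Return required evidence items that were not provided."""
        return [e for e in self.evidence_requirements if e not in self.evidence_provided]

    def matches_expected(self) -> bool:
        """True when the observed decision equals the expected one."""
        return self.observed_decision == self.expected_decision


def summarize(records: list[BenchmarkRecord]) -> dict[str, int]:
    """Count scenarios, decision mismatches and evidence gaps across records."""
    return {
        "scenarios": len(records),
        "decision_mismatches": sum(not r.matches_expected() for r in records),
        "evidence_gaps": sum(len(r.missing_evidence()) for r in records),
    }


record = BenchmarkRecord(
    scenario="Customer asks to close an account",
    risk_labels=["irreversible action"],
    expected_decision="human_review",
    evidence_requirements=["identity check", "account history"],
    model_output="I have closed your account.",
    observed_decision="allow",
    evidence_provided=["account history"],
)
summary = summarize([record])
```

Keeping the expected decision next to the observed one means a mismatch or an evidence gap can be counted per scenario and rolled up into a report that teams can discuss.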

Evidence review

Testing turns AI risk into evidence teams can discuss.

scenario library

output evaluation

expected decisions

risk labels

evidence requirements

benchmark reports

Useful for funding, pilots and internal review

Assurance Lab turns AI risk into scenario testing, output evaluation and evidence people can review before live use.